How to Conduct a Technical Interview

You've got 60 minutes, a candidate on the other side of the screen, and a hiring decision that could make or break your next product release. Most technical interviews either waste that time with trivia questions or fail to reveal whether someone can actually do the job.

Vladan Ćetojević

Summary

A technical interview is a structured, skills-based evaluation that assesses a candidate's technical problem-solving, communication, and judgment, not trivia recall.

Purpose: Evaluate job-relevant technical competence

Common formats: Live coding, system design, take-home projects, technical Q&A

Core stages: Screening → Assessment → Live interview → Panel debrief

Primary benefit: Strong predictor of on-the-job performance

Best for: Engineering, data, DevOps, QA, technical leadership roles

Setup difficulty: Moderate, requires preparation and a scoring rubric

Alternatives: Paid trials, portfolio reviews, structured references

Conducting a technical interview means running a structured, skills-based evaluation that tests a candidate's real-world problem-solving ability, communication style, and technical depth, in that order. Done right, it's the single most reliable predictor of on-the-job performance you have.

What Is a Technical Interview?

A technical interview is a structured hiring evaluation that assesses a candidate's domain-specific skills, problem-solving approach, and engineering judgment through live exercises, take-home assignments, or system design discussions. It is typically used for software development, data engineering, DevOps, and other technical roles where skills cannot be assessed through a resume alone.

Technical interviews work by presenting candidates with real or simulated problems, then evaluating both the quality of their solutions and their thinking process. The goal isn't to catch people out. It's to observe how they work.

Why Most Technical Interviews Fail

The majority of technical interviews fail candidates and companies equally, and the reason is almost always a lack of structure. A hiring manager who improvises questions, changes the evaluation criteria mid-interview, or relies on "gut feel" isn't conducting a technical interview. They're having a technical conversation, which is a very different thing.

Research from Google's Project Oxygen, published through their re:Work program, found that unstructured interviews predict job performance only marginally better than chance. Structured interviews, with defined questions, consistent scoring, and role-specific rubrics, are two to three times more predictive. For remote hiring, especially if you're based in the US and evaluating candidates from Eastern Europe or other popular regions for hiring developers, structure isn't optional. It's the entire foundation.

The fix isn't complicated. It starts with knowing exactly what you're testing, designing exercises that mirror real work, and scoring every candidate against the same criteria. FatCat Remote builds this structure into every technical hire we facilitate, because inconsistent interviews are one of the most expensive mistakes a company can make.

How to Conduct a Technical Interview: Step-by-Step

Getting this right takes preparation before the interview starts, discipline during it, and an honest debrief after. Here's the full process.

Step 1: Define the Role's Technical Requirements

Before writing a single interview question, list the 5 to 7 technical skills the role genuinely requires. Not the skills that would be nice to have, but the ones someone will use in week one.

For a backend developer, that might mean REST API design, SQL query optimization, and debugging under production constraints. Similarly, for a data analyst, it could be SQL fluency, Python for data manipulation, and experience explaining findings to non-technical stakeholders.

Write these down and rank them. This list becomes your scoring rubric. Every interview question or exercise you design should map directly back to at least one item on it.
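As a rough illustration, the ranked list can be captured in a few lines of Python so every interviewer works from the same source. This is a minimal sketch, not a prescribed tool: the `Skill` class, the `build_rubric` helper, and the last two skills in the example are hypothetical and only there to reach the five-skill minimum.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    rank: int  # 1 = most important for week-one work


def build_rubric(skill_names):
    """Turn an ordered list of skill names into a ranked rubric.

    The 5-to-7 limit mirrors the guidance above; adjust it if your role differs.
    """
    if not 5 <= len(skill_names) <= 7:
        raise ValueError(f"list 5 to 7 required skills, got {len(skill_names)}")
    if len(set(skill_names)) != len(skill_names):
        raise ValueError("skill names must be unique")
    return [Skill(name, rank) for rank, name in enumerate(skill_names, start=1)]


# Hypothetical backend-developer rubric. The first three skills come from the
# example above; the last two are illustrative placeholders.
backend_rubric = build_rubric([
    "REST API design",
    "SQL query optimization",
    "Debugging under production constraints",
    "Automated testing",
    "Code review communication",
])

for skill in backend_rubric:
    print(f"{skill.rank}. {skill.name}")
```

Keeping the order explicit makes it harder for a nice-to-have skill to drift up the list between interviews.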

Step 2: Choose the Right Assessment Format

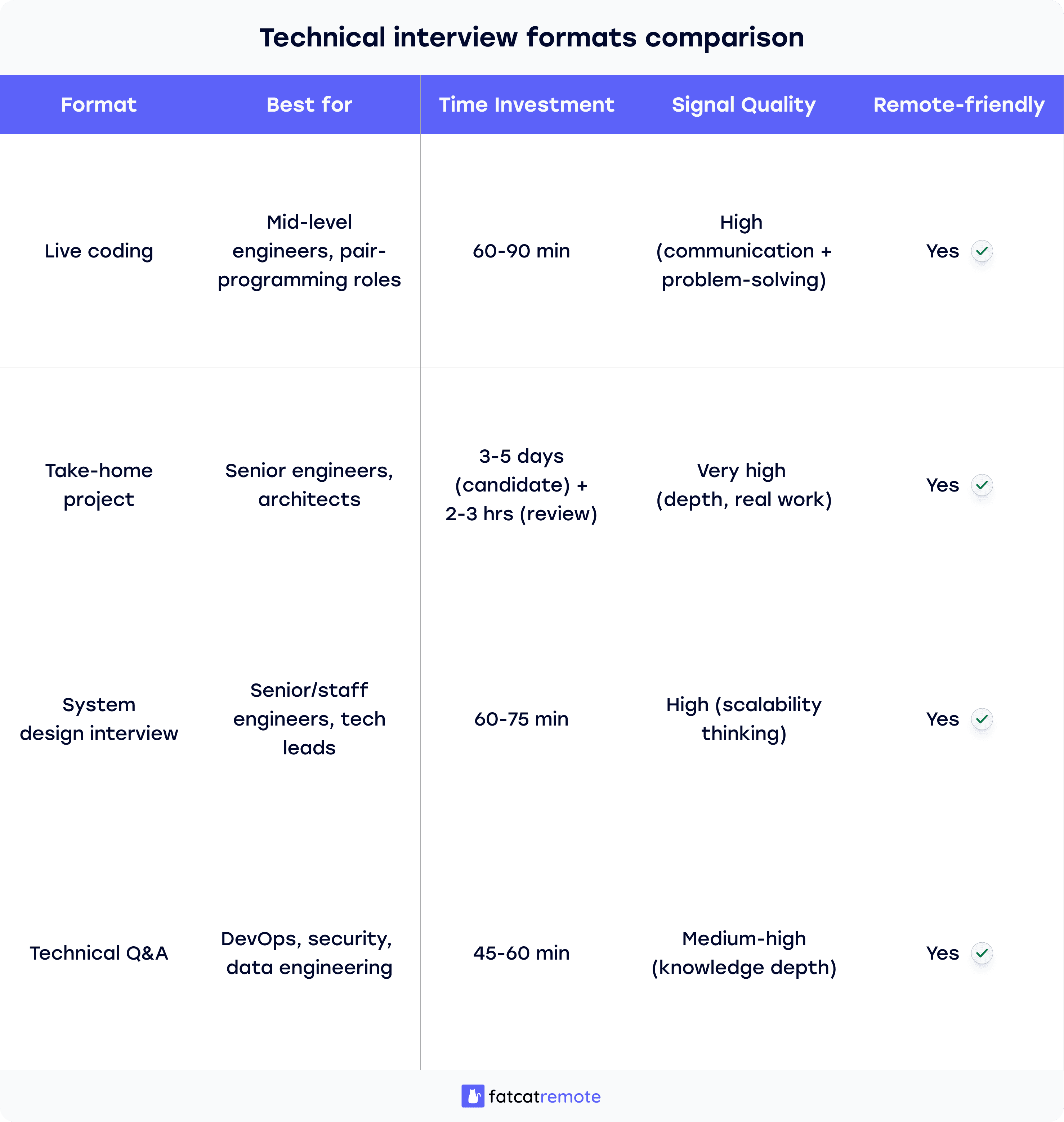

The format of your technical interview should match the role and the type of thinking it demands. Four formats work reliably well:

Live coding works best for roles where real-time problem-solving speed and communication matter. Think of software engineering positions where pair-programming is common.

Take-home projects work better for senior roles where depth and architectural thinking matter more than speed. Give candidates 3 to 5 days and a realistic scope (2 to 4 hours of work). Be explicit: don't assign unpaid work disguised as an "assessment."

System design interviews test how candidates think at scale. They're useful for senior engineers, architects, and technical leads. Ask candidates to design something like "a URL shortener that handles 10 million requests per day" and evaluate their trade-off reasoning.

Technical Q&A suits roles where applied knowledge matters more than live coding, such as DevOps, security, and data engineering. Ask scenario-based questions tied to real situations the role will encounter.
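For the system design prompt above, it helps to know the rough numbers before the interview so you can tell whether a candidate's estimate is in the right range. The sketch below converts the 10 million requests per day into requests per second. The 3x peak multiplier is an assumption for illustration only; real traffic patterns vary.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def average_rps(requests_per_day):
    """Average requests per second if traffic were perfectly even."""
    return requests_per_day / SECONDS_PER_DAY


def peak_rps(requests_per_day, peak_factor):
    """Estimated peak load, given an assumed peak-to-average multiplier."""
    return average_rps(requests_per_day) * peak_factor


avg = average_rps(10_000_000)
peak = peak_rps(10_000_000, peak_factor=3)  # 3x is an assumed multiplier
print(f"average: {avg:.1f} req/s, assumed peak: {peak:.1f} req/s")
# prints "average: 115.7 req/s, assumed peak: 347.2 req/s"
```

A candidate who arrives at roughly a hundred requests per second on average, then asks about peak traffic and the read/write mix, is showing the kind of trade-off reasoning this format is meant to surface.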

Step 3: Write Your Questions Before the Interview

Never wing the question phase. Write 4 to 6 questions ahead of time, organized by difficulty from moderate to hard. For each question, write down what a strong answer looks like before you hear any candidate responses.

This pre-writing step matters more than most interviewers realize. It forces you to think through the expected solution, anticipate alternative approaches, and separate "answers I personally prefer" from "correct approaches." A candidate who solves a problem differently than you would isn't wrong, unless their approach has a genuine flaw.

Tie each question to your rubric from Step 1. If a question doesn't test a skill on your list, cut it.
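One way to apply this check mechanically is to tag each draft question with the skills it tests, then separate the questions that hit the rubric from those that don't. The sketch below is a hypothetical helper, not part of any interview tool; the skill names and sample questions are made up for illustration.

```python
def split_questions(questions, rubric_skills):
    """Separate questions that test a rubric skill from ones that test none.

    questions: list of (question_text, [skills_it_tests]) pairs.
    """
    rubric = set(rubric_skills)
    keep, cut = [], []
    for text, skills in questions:
        (keep if rubric & set(skills) else cut).append(text)
    return keep, cut


def untested_skills(questions, rubric_skills):
    """Rubric skills that no question covers yet, in rubric order."""
    covered = {skill for _, skills in questions for skill in skills}
    return [skill for skill in rubric_skills if skill not in covered]


# Illustrative data only.
rubric = ["REST API design", "SQL query optimization", "Debugging"]
questions = [
    ("Design an endpoint for paginated order history", ["REST API design"]),
    ("This query takes 12 seconds. How would you investigate?",
     ["SQL query optimization", "Debugging"]),
    ("What year was SQL first standardized?", ["Trivia"]),
]

keep, cut = split_questions(questions, rubric)
print("cut:", cut)
print("untested:", untested_skills(questions, rubric))
```

The second helper catches the opposite problem: a rubric skill that no question tests.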

Step 4: Set Up the Interview Environment

Send candidates a clear technical setup guide at least 48 hours before the interview. At a minimum, specify:

What language they can work in (or whether the language is flexible).

Whether they can use documentation.

What the interview will cover at a high level.

Surprises favor interviewers, not candidates. Reducing setup friction shows respect for the candidate's time and gives you a cleaner signal on their actual skills rather than their ability to troubleshoot Zoom under pressure.

For remote interviews, you can use a shared coding environment as an alternative to asking candidates to share their screen. CoderPad, CodeSignal, and Codility all support this. You get a cleaner recording, a cleaner evaluation, and the candidate isn't fighting their own environment.

Step 5: Open With a Structured Warm-Up

The first 5 to 10 minutes of a technical interview set the tone for everything that follows. Don't start with a hard coding problem cold. Start with a brief role context, a quick introduction from your side, and one low-stakes technical question that gets the candidate talking.

“Tell me about a recent project where you had to make a meaningful decision. How did you evaluate your options and what was your decision?”

This isn't a trick question. It's a calibration question. You're watching how they structure their thinking, not testing a specific skill.

Step 6: Run the Core Technical Exercise

Now move to your primary assessment. Whether it's a live coding problem, a system design question, or a scenario walkthrough, the mechanics are the same.

State the problem clearly and completely before the candidate starts. Let them ask clarifying questions; this is part of what you're evaluating! A candidate who starts coding immediately without clarifying ambiguous requirements is showing you something important.

As they work, ask open questions: "What are you considering here?" or "What trade-offs are you weighing?" You want to hear their reasoning, not just watch their output. A candidate who builds an elegant solution in silence tells you far less than one who talks through their decisions, even if their code is slightly messier.

If a candidate gets stuck, resist the urge to hand over the answer. Offer a prompt instead: "You mentioned you'd need to handle edge cases. Which ones are you thinking about first?"

The way a candidate responds to a hint tells you as much as the answer itself.

Step 7: Score During the Interview, Not After

Use your rubric in real time. After each segment, make brief notes against your criteria. For example, "Handled null input case without prompting, strong" or "Needed two hints on time complexity, moderate."

Don't wait until after the interview to score. Memory is unreliable, and the most recent thing a candidate said will disproportionately color your recall of everything before it. The rubric exists precisely to counter this.

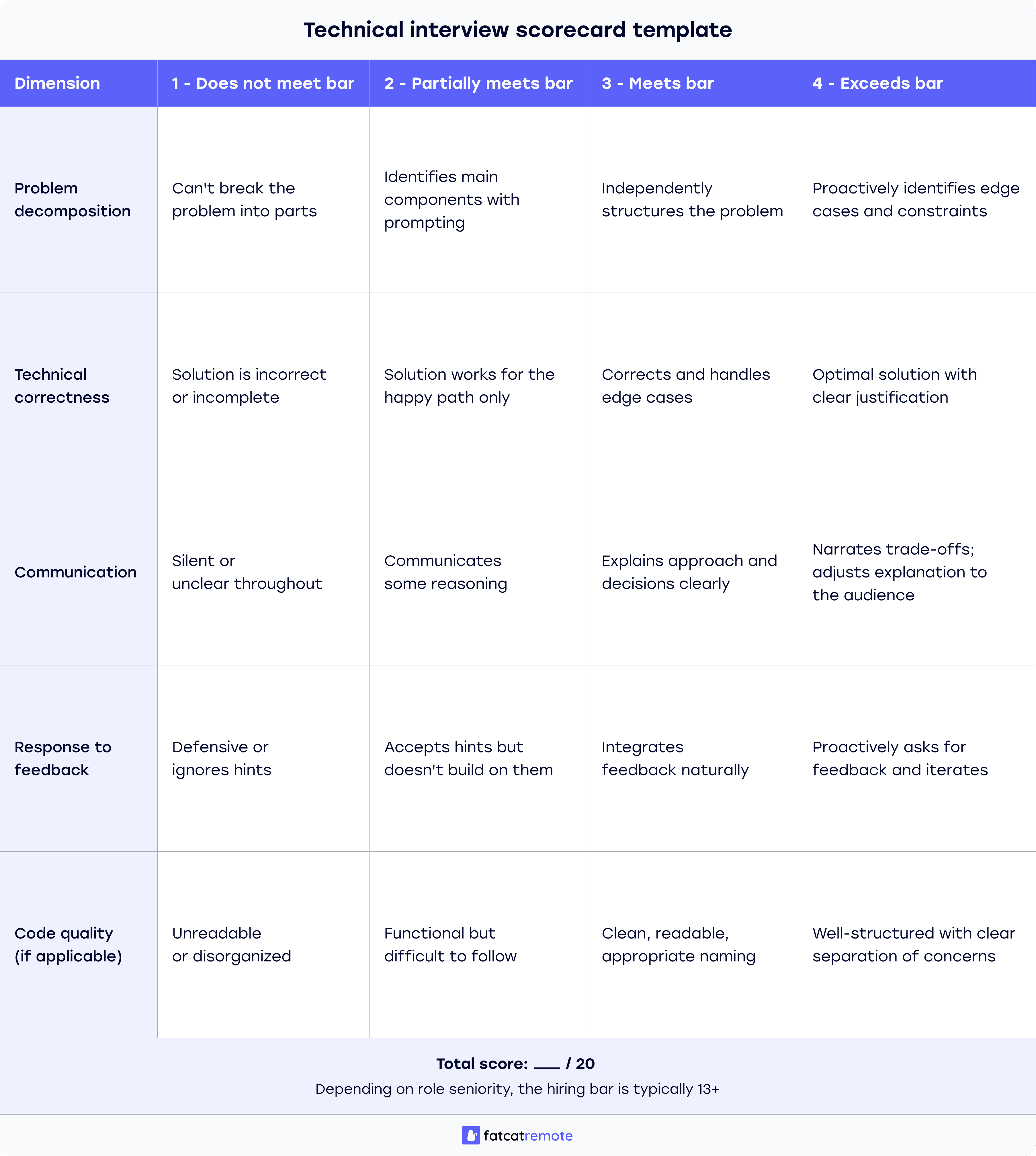

A simple 1-to-4 scale works:

1 - Does not meet bar

2 - Partially meets bar

3 - Meets bar

4 - Exceeds bar

Avoid 5-point scales, which tend to collapse into a 3-point scale in practice because people avoid the extremes.

Technical Interview Scorecard (Template)

Use this rubric for every candidate to maintain consistency across your interview panel.
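If your team tracks scores in code or a script rather than a document, a scorecard could be sketched along these lines. This is an assumption-laden illustration, not the template itself: the criteria names, the `render_scorecard` helper, and the unweighted average are all hypothetical. The 1-to-4 levels and the evidence notes follow the scale and examples above.

```python
from enum import IntEnum


class Score(IntEnum):
    DOES_NOT_MEET = 1
    PARTIALLY_MEETS = 2
    MEETS = 3
    EXCEEDS = 4


def render_scorecard(candidate, entries):
    """Format (criterion, score, evidence) rows as plain text."""
    lines = [f"Scorecard: {candidate}"]
    for criterion, score, evidence in entries:
        label = score.name.replace("_", " ").capitalize()
        lines.append(f"- {criterion}: {int(score)} ({label}). Evidence: {evidence}")
    # Unweighted average is an assumed summary; weight by rubric rank if you prefer.
    average = sum(int(score) for _, score, _ in entries) / len(entries)
    lines.append(f"Average: {average:.2f}")
    return "\n".join(lines)


# Hypothetical criteria; replace them with the skills from your own rubric.
card = render_scorecard("Candidate A", [
    ("Problem decomposition", Score.MEETS,
     "handled null input case without prompting"),
    ("Complexity analysis", Score.PARTIALLY_MEETS,
     "needed two hints on time complexity"),
])
print(card)
```

Requiring an evidence string next to every score keeps the later debrief grounded in what the candidate actually did.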

Step 8: Close With Candidate Questions

Reserve the last 10 minutes for the candidate's questions. This matters for two reasons: it gives you a final read on how they think about roles and work environments, and it signals respect.

A candidate who asks detailed questions about team process, technical debt, and deployment frequency is showing you the same curiosity and judgment you want in the role. A candidate who has no questions is either disengaged or hasn't been given enough information to ask good ones. It's worth working out which.

If there's a real challenge in the codebase or team, name it. Candidates who accept roles with a clear-eyed view of the challenges retain at a much higher rate than those who discover problems after joining.

Step 9: Debrief as a Team Before Making a Decision

Gather everyone who participated in the interview loop within 24 hours, while recall is still fresh. Each interviewer shares their score and the evidence behind it without advocating for the candidate, and no one reveals a hire or no-hire decision until everyone has shared.

This debrief format, borrowed from structured decision-making practices used at companies like Amazon, prevents the most common bias in hiring: the loudest or most senior person anchoring the group's view before independent assessments are made.

→ "I just didn't feel like they'd be a culture fit" isn't evidence.

→ "They struggled to explain their design decisions under follow-up questions across three separate segments" is evidence.
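To keep assessments independent, some teams collect scores in writing before anyone speaks. The sketch below shows one possible way to do that in Python: it refuses to summarize until every expected interviewer has submitted, then flags the criteria where scores diverge. The function name, the data layout, and the two-point spread threshold are assumptions for illustration.

```python
def debrief_summary(scores_by_interviewer, expected_interviewers, spread_threshold=2):
    """Summarize independent 1-to-4 scores and flag criteria to discuss.

    scores_by_interviewer: {interviewer: {criterion: score}}
    Raises ValueError if any expected interviewer has not submitted yet,
    so nobody sees the others' scores before committing their own.
    """
    missing = sorted(set(expected_interviewers) - set(scores_by_interviewer))
    if missing:
        raise ValueError(f"waiting on independent scores from: {', '.join(missing)}")

    criteria = sorted({c for scores in scores_by_interviewer.values() for c in scores})
    summary = {}
    for criterion in criteria:
        values = [s[criterion] for s in scores_by_interviewer.values() if criterion in s]
        spread = max(values) - min(values)
        summary[criterion] = {
            "scores": values,
            "spread": spread,
            "discuss": spread >= spread_threshold,  # assumed threshold
        }
    return summary


# Illustrative scores only.
scores = {
    "hiring_manager": {"System design": 3, "Communication": 4},
    "tech_interviewer": {"System design": 1, "Communication": 3},
}
summary = debrief_summary(scores, ["hiring_manager", "tech_interviewer"])
for criterion, row in summary.items():
    print(criterion, row)
```

A large spread on one criterion is a prompt to compare evidence, not a reason to average the disagreement away.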

What to Test at Each Seniority Level

Not every technical interview should look the same. The skills that predict success in a junior engineer differ significantly from those that predict success in a staff engineer or technical lead.

For Junior Engineers (0-3 Years Experience)

What to test: Core data structures, basic algorithm fluency, readable code, ability to break a problem into smaller steps, and willingness to ask for help when stuck.

What not to penalize: Slower execution speed, reliance on documentation, unfamiliarity with your specific stack. These are learnable. Problem-solving instinct and communication clarity are harder to teach.

Good exercise format: A moderately complex coding problem with a clear solution path, plus one follow-up question asking them to optimize or handle an edge case they missed.

For Mid-Level Engineers (3-7 Years Experience)

What to test: Code design judgment, debugging under realistic constraints, ability to articulate trade-offs, experience integrating with external systems or APIs, and ownership of a feature end-to-end.

What to weigh heavily: Communication quality. Mid-level engineers need to work independently and communicate status and blockers clearly. Watch for this in how they narrate their approach.

Good exercise format: A take-home project or a live problem with ambiguous requirements that requires clarifying questions before coding starts.

For Senior Engineers and Technical Leads (7+ Years)

What to test: System design depth, mentorship instincts, ability to evaluate multiple architectural approaches with honest trade-offs, and experience leading technical decisions under ambiguity.

What not to over-index on: Raw coding speed or memorized algorithm patterns. A senior engineer who struggles to recall textbook implementations but can design scalable systems and reason through trade-offs is usually the stronger hire.

Good exercise format: A system design discussion plus a scenario question about a past technical failure and how they handled it.

Who Should Be in the Interview Loop?

The composition of your interview panel matters as much as the questions you ask. A common mistake is filling the panel with people who all evaluate the same dimension of the role.

A well-structured technical interview loop for a software engineering role typically includes:

Hiring manager: Evaluates role fit and work style.

Technical interviewer: At or above the candidate's level, so they can evaluate technical skills.

(Optional) Cross-functional stakeholder: Evaluates communication and collaboration.

Three to five interviews is the practical ceiling. Beyond that, the hiring process becomes exhausting for both sides, with diminishing informational return.

For remote roles specifically, the kind that FatCat Remote specializes in, consider adding one interviewer who evaluates async communication skills and remote work behaviors explicitly.

Can this candidate write a clear technical summary?

Do they ask good questions over text?

Do they demonstrate self-direction?

These traits predict remote performance better than most technical skills.

Common Mistakes When Conducting Technical Interviews

Even well-intentioned hiring managers make predictable errors when running technical interviews. Most of them stem from a lack of structure rather than bad judgment.

Mistake 1: Testing Trivia Instead of Judgment

Interviewers default to questions with clear right/wrong answers because they're easier to evaluate. "What's the time complexity of a merge sort?" is simple to grade. "How would you approach reducing the latency in our payment processing pipeline?" requires evaluating reasoning, not recall.

For every question on your list, ask yourself: "Does this test knowledge someone uses regularly on the job, or knowledge they could look up in 30 seconds?" If it's the latter, cut it. The goal is to evaluate how candidates think with information, not whether they've memorized it.

Mistake 2: No Consistent Rubric Across Candidates

Interviewers trust their experience to evaluate candidates fairly, not realizing that "experience" often means "pattern-matching to candidates who look or sound like successful past hires."

Write your scoring rubric before the first interview. Review it before each subsequent one. Every candidate gets evaluated against the same criteria, not against each other.

Mistake 3: Talking More Than the Candidate

Interviewers fill silences, explain context the candidate didn't ask for, or inadvertently steer candidates toward the answer they're looking for.

Target a 70/30 split. The candidate should be talking roughly 70% of the time. After you ask a question, stop talking. Silence is data. A candidate who needs 20 seconds to formulate a response is often a candidate who is actually thinking carefully.
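If you record interviews and have per-speaker timings, you can check the split after the fact. The sketch below assumes a hypothetical list of `(speaker, seconds)` segments; how you obtain those timings (a transcript export, manual notes) is outside its scope.

```python
def talk_share(segments):
    """Return each speaker's share of total talk time.

    segments: iterable of (speaker, seconds) pairs.
    """
    totals = {}
    for speaker, seconds in segments:
        totals[speaker] = totals.get(speaker, 0) + seconds
    total = sum(totals.values())
    if total == 0:
        raise ValueError("no talk time recorded")
    return {speaker: spoken / total for speaker, spoken in totals.items()}


# Illustrative timings, in seconds.
segments = [
    ("interviewer", 360),
    ("candidate", 900),
    ("interviewer", 450),
    ("candidate", 990),
]
shares = talk_share(segments)
for speaker, share in shares.items():
    print(f"{speaker}: {share:.0%}")
```

If the interviewer's share creeps well above 30%, that is a signal to ask shorter questions and leave more silence.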

Mistake 4: Skipping the Debrief

Everyone is busy. The interview went well (or clearly badly). The decision feels obvious. The debrief feels like a formality.

Run the debrief anyway. The decisions that seem obvious are the ones most likely to harbor unchecked bias. The debrief is where "obvious" decisions get stress-tested.

Conclusion

I've seen companies run technical interviews that took months, involved six rounds, and still resulted in a bad hire, because every round tested the same thing and no one agreed on the criteria beforehand.

I've also seen a single well-designed 90-minute interview reveal more about a candidate than a two-month trial period.

The difference is always preparation. Build the rubric before the first interview. Write your questions the day before, not the morning of. Debrief as a panel before your opinions have time to blur together.
